Teaching robots to dance

“When you work with someone you know, you benefit from mutual understanding,” says Wenlong Zhang, an assistant professor of systems engineering in the Ira A. Fulton Schools of Engineering. “You do things well together because you have learned how the other person thinks and moves.”

Such nuanced communication is complex and dynamic. It requires interpreting others’ intents and actions in real time. It is also far beyond the capabilities of current robots that work alongside people on, for example, factory assembly lines or in hospital operating rooms. Consequently, the scope of physical coordination between humans and robots is limited.

“Many existing robots are not really collaborative,” Zhang says. “They are sidelined with predefined tasks. There is no back and forth. It’s unilateral and production-focused. By contrast, bilateral, interaction-focused work among robots and humans could yield much higher efficiencies.”

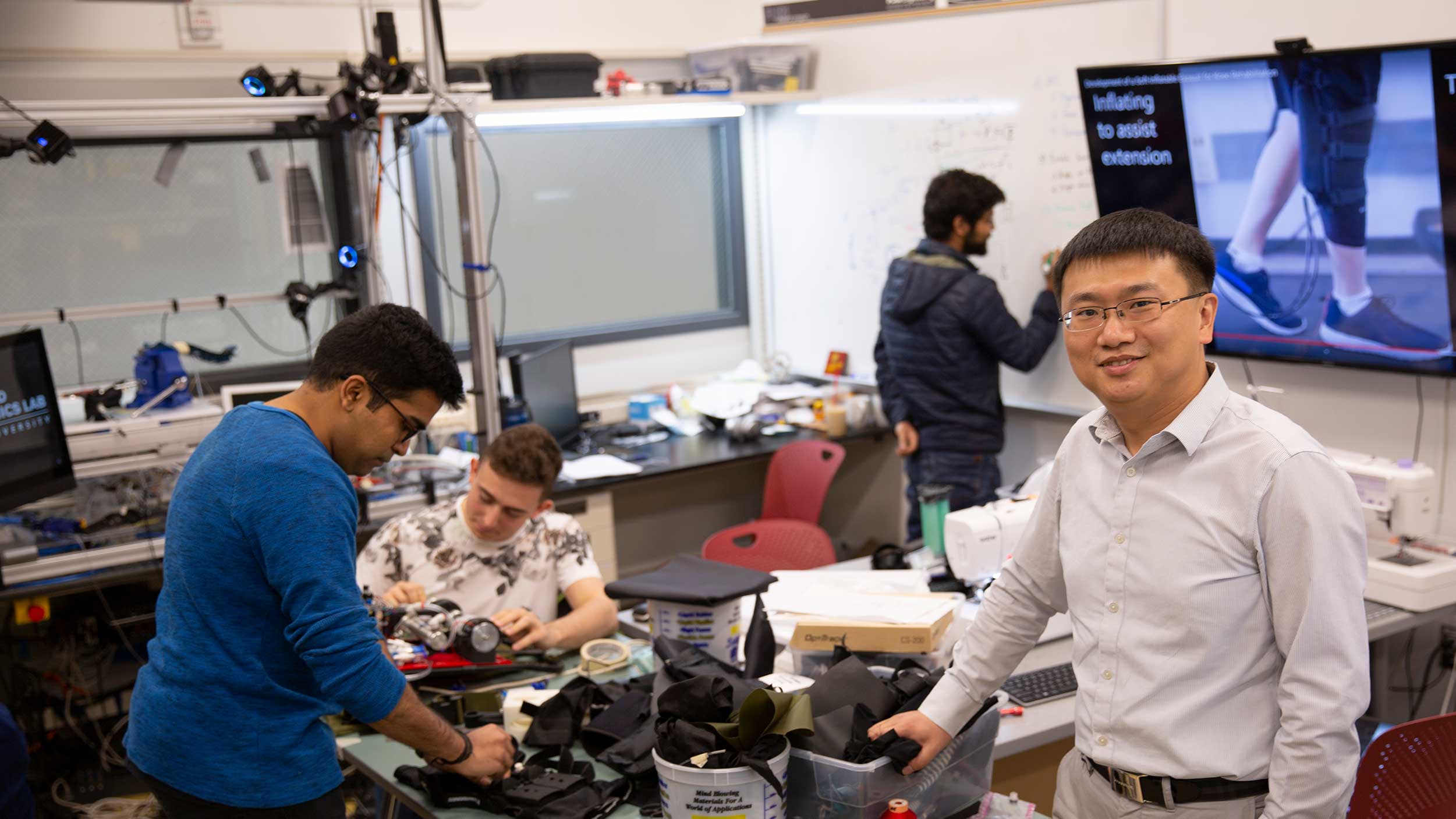

Zhang and his team at Arizona State University’s Robotics and Intelligent Systems Laboratory, or RISE Lab, want to expand the scope of teamwork between humans and robots. They intend to do so by developing algorithmic models of human cognition and motion that robots can apply to anticipate and react to human behavior in novel ways.

Zhang’s vision has captured the attention of the National Science Foundation, which selected him for a 2020 Faculty Early Career Development Program (CAREER) Award. This recognition is reserved for researchers who show the potential to be academic role models and to advance the missions of their organizations. CAREER awards provide approximately half a million dollars over five years to further each recipient’s research.

For Zhang and his team, advancing the possibilities for physical collaboration between humans and robots begins with a finite application: an intelligent, motorized knee brace to support stroke survivors in rehabilitating their walking ability. This focus reflects previous work that Zhang has done with the Barrow Neurological Institute in Phoenix.

“Think about a physical therapist working with a patient,” he says. “The patient knows why her leg is being manipulated in a particular way. She understands the intent of the therapist, and the two of them can work effectively together.”

But if a current robot were tasked with assisting a human in a similar manner, Zhang says, there would be significant confusion. “On the one hand, the patient likely would have no idea what the robot is doing to help,” he explains. “And on the other hand, the robot could not understand the intent of the patient’s actions. Successful interaction of this kind is both physical and cognitive — like a dance.”

Helping humans and robots learn to “dance” in the context of Zhang’s knee brace or motorized exoskeleton requires developing and testing baseline models of human body motion and human cognitive states during direct physical contact with a robot.

Of course, there is wide variability to consider. “We need biomechanical data on sitting, standing, walking, using stairs and more,” Zhang says. “We also need baseline models for people of different heights, weights and ages. Even educational backgrounds matter. A mechanical engineer and a landscaper, for example, will interact with a robot in very different ways.”

Zhang is hopeful that the initial rounds of this research will permit testing with stroke rehabilitation patients during the next five years. “Anyone can have a stroke,” he says. “So, this work at the nexus of the mind, muscle-motor function and machines is a challenge with broad potential impact.”

Zhang also highlights other possible innovations. “We can talk about driver assistance systems for older people who may start to lose their focus or who experience weakening musculoskeletal systems,” he says. “Then there are potential applications such as exoskeletons to support soldiers on the battlefield. A deeper understanding of physical collaboration between humans and robots can be leveraged for many new opportunities.”

Gary Werner

Science writer, Ira A. Fulton Schools of Engineering

(480) 727-5622

More news from ASU Engineering

13 ASU engineering faculty earn the prestigious NSF CAREER Award

ASU, Banner Health team up to ease COVID-19 patient isolation

Engineering students supply PPE to health care providers

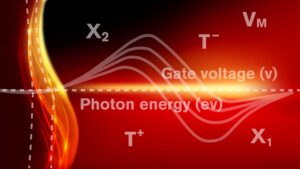

Shedding new light on nanolasers using 2D semiconductors

Technology engineered at ASU 50 years ago helps battle COVID-19 infection